Nodebox english linguistics library3/26/2023  But a lot of the words did seem to be truly unusual. The main false positives appeared to be British spellings, skurried, neighbour, curtsey, Roman numerals, and real words inexplicably missing from the original corpus, kid, proud. This produced the shortest list yet, at 71 words. lower () lemmatized_forms = for form in lemmatized_forms : if form != word : return form return word

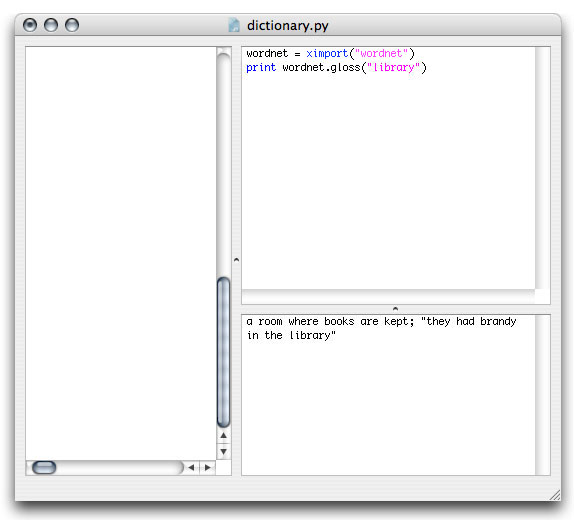

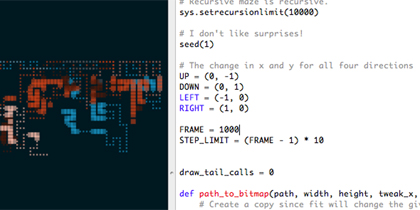

It is meant to reduce words to their " lemma," the "the canonical, dictionary or citation form of a word." Sounds promising.ĭef basic_word_form ( word ): word = word. Some of the outputs: trembl, turtl, difficulti. The stemmers didn't seem to do exactly what I needed-they often lobbed off conjugations or irregular plurals such that what remained was not an English word. These returned lists of 187 and 188 words, respectively. SnowballStemmer ( 'english' ) return stemmer. difference ( english_vocab )) def basic_word_form_snowball ( word ): word = word. difference ( english_vocab ) basic_forms = set ( basic_word_form_snowball ( w ) for w in unusual ) return sorted ( basic_forms. stem ( word ) def unusual_words_snowball ( text ): text_vocab = set ( w. difference ( english_vocab )) def basic_word_form_porter ( word ): word = word. difference ( english_vocab ) basic_forms = set ( basic_word_form_porter ( w ) for w in unusual ) return sorted ( basic_forms. I rewrote unusual_words as follows:ĭef unusual_words_porter ( text ): text_vocab = set ( w. Since this method is much slower than the simple set difference in the original method, I implemented it as a second pass, only to be used on words that were not found in the first diff. For example, is_verb? did not return true for all conjugated verbs and singular had some unexpected results, so I ended up running the infinitive and singular methods on everything, and returning them if they returned a non-empty result that was different from the starting word. To this end, I tried the NodeBox English Linguistics Library. Singular, in the case of nouns, and infinitive, in the case of verbs. I wanted to get the words into their most "basic" forms. eggs) and the verbs were conjugated (e.g.

But this corpus had a quarter-million words in it, surely it should have these incredibly common words? Then I noticed something about the words. I got a little frustrated and tried using some other corpuses.

Ok, so maybe this was going to be harder than I thought. Plenty of them seemed to be pretty common: eggs, grins, happens, presented.

That seemed like an awful lot, so I scanned through some of them. difference ( english_vocab ) return sorted ( unusual ) The book is kind enough to supply a function that should do this very thing:ĭef unusual_words ( text ): text_vocab = set ( w. So, armed with the text of Alice in Wonderland, I started on my quest (pedantic note: "Jabberwocky" in fact appears in Through the Looking Glass, so going into this I wasn't sure what, if any, invented words I should expect to find in Alice). With that in mind, I was thinking about how to identify uncommon or invented words in a text. I've been working through the book Natural Language Processing in Python and also love Carroll's use of language, including his tendencies to just invent words and rely on context and sound symbolism to make them comprehensible. Going down this rabbit hole, I got obsessed with this adjacency matrix of characters in Les Misérables and wondering what else I might be able to look into about my favorite books with code. I'm currently spending the summer at the Recurse Center doing my own self-directed learning, so it seemed like a great time to start examining the overlap in this Venn diagram. As both a programming nerd and a literature nerd □ I've recently been trying to find more ways to unite the two.
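The two-pass idea used in this post (a cheap set difference first, then a slower normalization step applied only to the leftovers) can be sketched without any external libraries. This is a minimal illustration, not the code that was actually run: `toy_singular` is a hypothetical stand-in for a real singularizer, stemmer, or lemmatizer, and the vocabulary and tokens are invented toy data rather than NLTK's word list or the text of Alice.

```python
def toy_singular(word):
    """Hypothetical stand-in for a real normalizer: strips one trailing 's'."""
    if word.endswith("s") and len(word) > 3:
        return word[:-1]
    return word


def unusual_words_two_pass(text, english_vocab, basic_word_form=toy_singular):
    # First pass: cheap set difference on lowercased alphabetic tokens.
    text_vocab = set(w.lower() for w in text if w.isalpha())
    unusual = text_vocab.difference(english_vocab)
    # Second pass: normalize only the leftovers, then diff again.
    basic_forms = set(basic_word_form(w) for w in unusual)
    return sorted(basic_forms.difference(english_vocab))


# Toy data, invented for illustration only.
vocab = {"egg", "grin", "the", "rabbit"}
tokens = ["The", "eggs", "grins", "rabbit", "slithy", ",", "toves"]

print(unusual_words_two_pass(tokens, vocab))  # ['slithy', 'tove']
```

Note that the second pass reports the normalized form ("tove") rather than the word as it appeared in the text ("toves"), which is also why stemmer output such as trembl can end up in the final list.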
